Despite technology such as interaction analytics being available to monitor 100% of calls, the majority of UK contact centres still have team leaders and line managers involved in scoring agent calls manually, with 80% of respondents from large operations also having a specific, dedicated quality team involved. Large and medium operations are also more likely to have coaches evaluating calls, which feeds into the process of understanding each individual’s need for specific improvement, as well as developing the wider training program. 45% of respondents from large operations have a compliance team evaluating calls, and large operations are also much more likely to use a business process improvement team to learn from the quality assurance (QA) output.

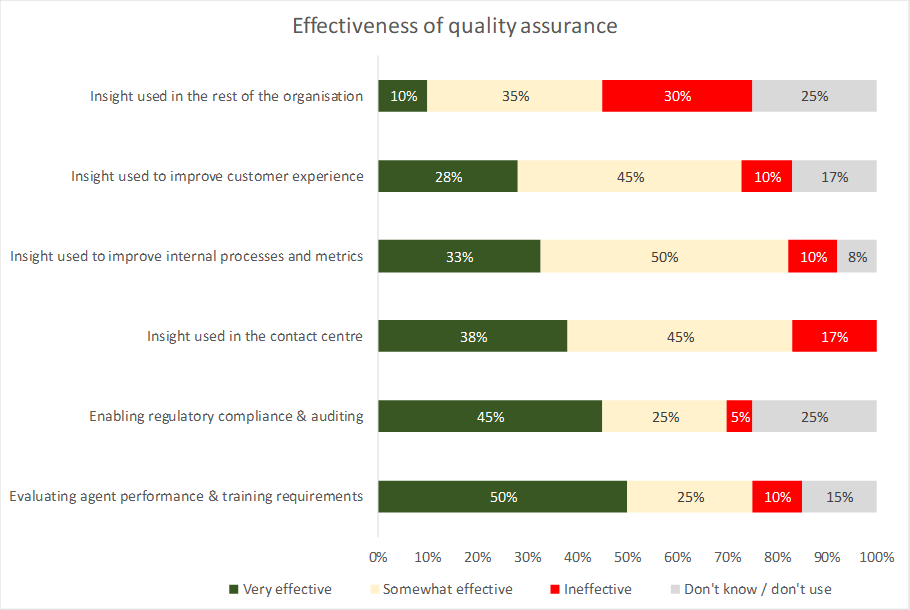

When respondents were asked how effective their QA processes are, it is noticeable that more of them are lukewarm about the results than are actively enthusiastic: only “enabling regulatory compliance and auditing” and “evaluating agent performance & training requirements” had more respondents judging the QA process as ‘very effective’ rather than merely ‘fairly effective’ for this purpose, showing that there is still a need for improved functionality.

Only 28% feel that QA drives customer experience improvements significantly, which shows that there is still quite a way to go. Customer insight gained from the quality assurance process stands a very significant risk of not being used effectively within the wider organisation, although the feeling is that it does generally help the outcome at agent level.

As such, it seems fair to comment that QA is currently used far more effectively and widely as a tool for improving compliance, agent productivity and skills, rather than as input into strategic business improvements.

Interaction analytics tries to take the guesswork out of improving customer experience, agent performance and customer insight. By moving from anecdote-based to evidence-based decisions, and from qualitative to quantitative information, some order is imposed on the millions of interactions that many large contact centres hold in their recording systems, improving the reliability of the intelligence provided to decision-makers. The need to listen to calls is still there, but those listened to are far more likely to be the right ones, whether for agent evaluation or business insight.

Organisations using customer contact analytics can carry out an evaluation of chosen calls (for example, those involving unhappy customers), the results of which can then be fed back into the existing quality assurance process. These are then treated in the same way as other evaluations, without upheaval or any need to alter the QA/QM process, only improving the quality and accuracy of the data used by the existing solution.
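As a rough illustration of how analytics output might choose the calls that go forward for evaluation, the minimal sketch below filters analysed calls by a sentiment score and queues the most negative ones for the existing QA process. The `AnalysedCall` record, the `sentiment` field, its range and the threshold are all hypothetical assumptions made for this example; they do not describe any particular vendor's output.

```python
from dataclasses import dataclass


@dataclass
class AnalysedCall:
    call_id: str
    agent_id: str
    # Hypothetical analytics score in [-1, 1]; negative suggests an unhappy customer.
    sentiment: float


def select_for_review(calls, sentiment_threshold=-0.5, limit=10):
    """Return up to `limit` calls at or below the threshold, most negative first."""
    flagged = [c for c in calls if c.sentiment <= sentiment_threshold]
    flagged.sort(key=lambda c: c.sentiment)
    return flagged[:limit]


calls = [
    AnalysedCall("c1", "a1", 0.6),
    AnalysedCall("c2", "a1", -0.8),
    AnalysedCall("c3", "a2", -0.55),
    AnalysedCall("c4", "a2", 0.1),
]

review_queue = select_for_review(calls)
print([c.call_id for c in review_queue])  # ['c2', 'c3']
```

The selected calls would then be scored with the same form as any other evaluation, so only the selection step changes.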

Being able to monitor 100% of calls with 100% of agents means that it is possible to make sure that agents comply with all business rules as well as regulations. Linking this information with metadata such as call outcomes, sales success rates and other business metrics means that the most successful behaviours and characteristics can be identified and shared across agent groups.

Customer interaction analytics is of great potential value to a business in terms of discovery, compliance and business process optimisation, but the improvements that the outputs from analytics can offer to other elements of the WFO suite, such as agent performance and training, should not be overlooked. Scorecards based on 100% of calls rather than a small sample are much more accurate, support better training and eLearning techniques, and have great potential to cut the cost of manually QAing calls. Analysing all interactions also means that QA professionals are made aware of any outliers (either very good or very bad customer communications), providing great opportunities for propagating best practice or identifying urgent training needs, respectively.
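The difference between a sampled scorecard and one built from every call, and the idea of surfacing outliers, can be sketched as follows. This is a minimal example under stated assumptions: the 0 to 100 quality scores are invented, the function names are hypothetical, and a simple z-score cut-off stands in for whatever outlier detection a real analytics product uses.

```python
import random
from statistics import mean, pstdev


def full_scorecard(scores_by_call):
    """Average quality score over every analysed call."""
    return mean(scores_by_call.values())


def sampled_scorecard(scores_by_call, k, rng):
    """Average over a random sample of k calls, as in manual QA sampling."""
    return mean(rng.sample(list(scores_by_call.values()), k))


def find_outliers(scores_by_call, z=2.0):
    """Return calls whose score lies at least z standard deviations from the mean."""
    values = list(scores_by_call.values())
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:
        return {}
    return {cid: s for cid, s in scores_by_call.items() if abs(s - mu) / sigma >= z}


# Invented data: 18 typical calls, one very poor and one excellent.
scores = {f"c{i}": 70 for i in range(18)}
scores["low"] = 20
scores["high"] = 100

print(full_scorecard(scores))                           # mean over all 20 calls
print(sampled_scorecard(scores, 3, random.Random(0)))   # depends on which calls are drawn
print(sorted(find_outliers(scores)))                    # ['high', 'low']
```

A three-call sample will usually miss both the very poor and the excellent call, whereas scoring every call surfaces them for coaching or best-practice sharing.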

By monitoring and scoring 100% of calls, the opportunity exists to connect analytics, quality assurance and performance management, collecting information about, for example, first-contact resolution rates, right down to the individual agent level. Automatic evaluation of all calls means that businesses will no longer rely on anecdotal evidence, and will be able to break the call down into constituent parts, studying and optimising each element of each type of call, offering a far more scientific, evidence-based approach to improving KPIs than has previously been possible. Solution providers also believe that embedding analytics more closely into WFO is relatively culturally unchallenging (for the QA team at least), in that the operation is automating and improving something that they’ve done for many years.
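As one illustration of collecting a KPI down to the individual agent level, the sketch below computes first-contact resolution (FCR) rates per agent from a list of call records. The record shape, an `(agent_id, resolved_on_first_contact)` pair, is an assumption made for the example; in practice the resolution flag would come from the analytics or CRM system.

```python
from collections import defaultdict


def fcr_by_agent(calls):
    """Return {agent_id: share of that agent's calls resolved on first contact}."""
    totals = defaultdict(int)
    resolved = defaultdict(int)
    for agent_id, resolved_on_first_contact in calls:
        totals[agent_id] += 1
        if resolved_on_first_contact:
            resolved[agent_id] += 1
    return {agent_id: resolved[agent_id] / totals[agent_id] for agent_id in totals}


# Invented records: (agent_id, resolved_on_first_contact)
call_log = [
    ("a1", True),
    ("a1", False),
    ("a2", True),
    ("a2", True),
]

print(fcr_by_agent(call_log))  # {'a1': 0.5, 'a2': 1.0}
```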